Squeeze Every Drop of VRAM: Running Z-Image-Turbo-ControlNet on Low-End GPUs

Z-Image has been out for a while, and its image quality is widely praised. Its limitation is that it only does text-to-image, and text prompts alone give weak control over character poses. The Turbo version generates images quickly, so you can sample more candidates, but we still hope Z-Image-Edit comes out soon, ideally with support for multi-image references.

Before Z-Image-Edit arrives, we can try using Z-Image-Turbo-Fun-Controlnet-Union for pose control. Pose control is a mature technique in text-to-image, well established since the Stable Diffusion era. The open-source community's Z-Image ControlNet builds on the SD ecosystem and supports:

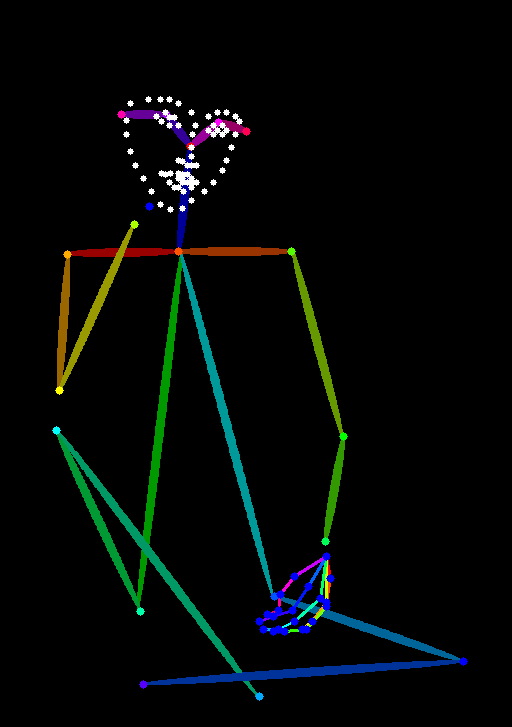

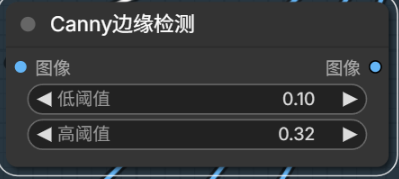

- Canny Edge Detection: Extracts hard edges from images, preserving edge features — similar to simple line drawings.

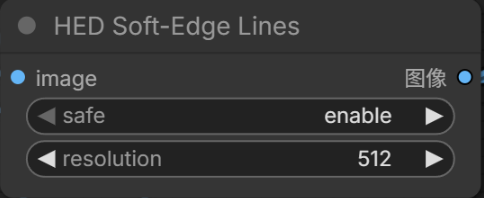

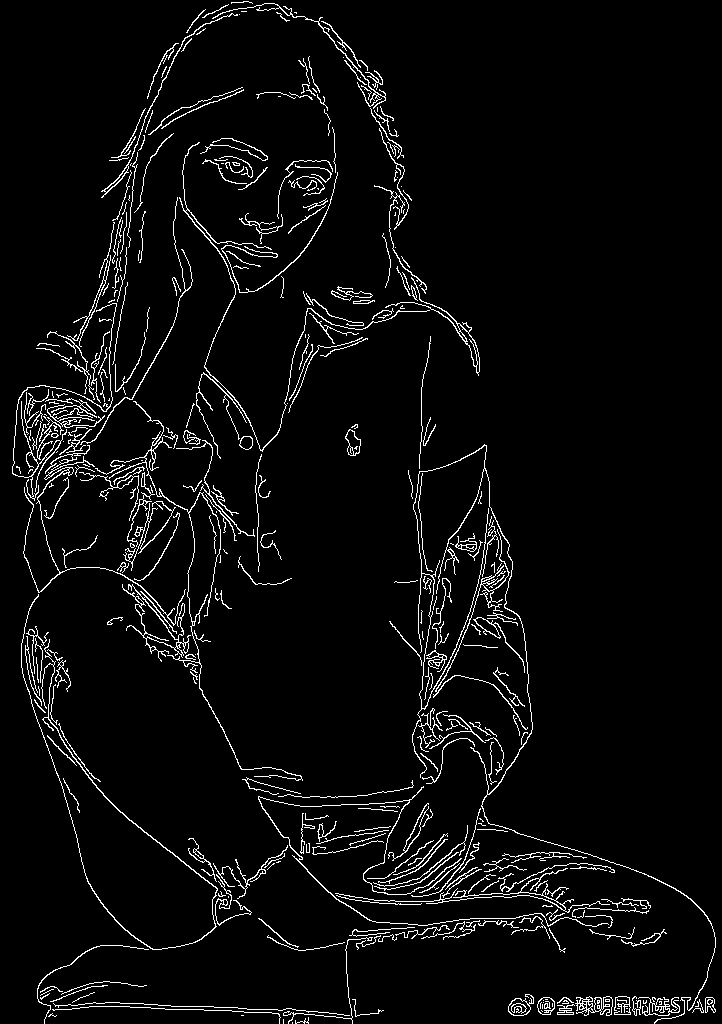

- HED Soft Edges: Compared to Canny, soft edges preserve transition information, closer to hand-drawn sketches.

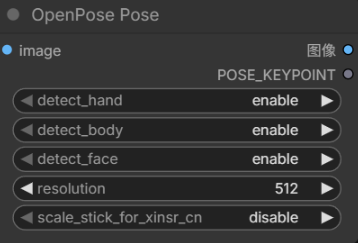

- OpenPose: Stick-figure skeleton maps that control body poses.

- Depth Estimation: Single-channel depth maps that use grayscale values to represent 3D spatial position.

- And many more
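To make the depth-map bullet above concrete, here is a minimal sketch of how raw depth values could be turned into a single-channel grayscale control image. It is not the Depth Anything code: the function name `depth_to_grayscale`, the sample distances, and the "nearer is brighter" convention are all assumptions made for illustration.

```python
def depth_to_grayscale(depth, near_is_bright=True):
    """Map a 2D grid of depth values to 8-bit grayscale (0-255).

    Hypothetical helper for illustration only; a real preprocessor
    predicts the depth values with a neural network first.
    """
    flat = [d for row in depth for d in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    out = []
    for row in depth:
        new_row = []
        for d in row:
            # Normalise to [0, 1]; the guard avoids dividing by zero
            # when every pixel has the same depth.
            t = (d - lo) / span if span else 0.0
            if near_is_bright:
                t = 1.0 - t  # assumed convention: closer -> brighter
            new_row.append(round(t * 255))
        out.append(new_row)
    return out


# Made-up 2x3 scene: distance from the camera in metres.
scene = [
    [1.0, 2.0, 3.0],
    [1.0, 3.0, 5.0],
]
gray = depth_to_grayscale(scene)
for row in gray:
    print(row)
```

The nearest pixels come out as 255 and the farthest as 0, so one channel is enough to encode the spatial layout that the ControlNet then follows.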

Download List

Using pose control requires downloading quite a few items.

First, download the Z-Image-Turbo-Fun-Controlnet-Union module. It is an additional module that stacks on top of the original Z-Image-Turbo and goes in the ComfyUI/models/model_patches directory. HuggingFace is the preferred source, but if download speed matters more, search ModelScope and pick the most-downloaded version; it works well and downloads very fast.

Next are the ControlNet processing nodes. Besides Canny (built into ComfyUI), we need the aux preprocessor plugin for the others:

- Plugin install: https://github.com/Fannovel16/comfyui_controlnet_aux

Processing model downloads: Each processing node needs its own model. By default the aux nodes download these automatically from HuggingFace, which is a dealbreaker if you can't reach HuggingFace from your network. In that case, download the models yourself and place them in ComfyUI/custom_nodes/comfyui_controlnet_aux/ckpts. All-in-one packs exist on file-sharing sites, but without a premium account they are a hassle, so I downloaded each model manually from ModelScope.
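Since files now land in two different folders, a quick check can save a failed run. The sketch below only uses the two directory paths named above; the function name `check_layout` and the `"ComfyUI"` root are assumptions, and it does not know which file names or subfolders the aux plugin expects inside ckpts, so it simply lists whatever it finds.

```python
from pathlib import Path

# Relative paths taken from the text above.
MODEL_PATCH_DIR = Path("models") / "model_patches"
AUX_CKPT_DIR = Path("custom_nodes") / "comfyui_controlnet_aux" / "ckpts"


def check_layout(comfy_root):
    """Return {relative_dir: sorted file list}, or None for a missing dir.

    Hypothetical helper: it reports what is on disk and makes no claim
    about which files the nodes actually require.
    """
    root = Path(comfy_root)
    report = {}
    for rel in (MODEL_PATCH_DIR, AUX_CKPT_DIR):
        folder = root / rel
        if not folder.is_dir():
            report[rel.as_posix()] = None
            continue
        # Walk subfolders too, since models may sit in nested directories.
        report[rel.as_posix()] = sorted(
            p.relative_to(folder).as_posix()
            for p in folder.rglob("*")
            if p.is_file()
        )
    return report


if __name__ == "__main__":
    # "ComfyUI" is an assumed install location; adjust to your setup.
    for rel, files in check_layout("ComfyUI").items():
        if files is None:
            print(f"{rel}: directory missing")
        else:
            print(f"{rel}: {len(files)} file(s)")
            for name in files:
                print(f"  {name}")
```

Run it from the folder that contains your ComfyUI install; an empty or missing directory usually means a download went to the wrong place.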

Model Download Checklist

| Model | ModelScope Link |

|---|---|

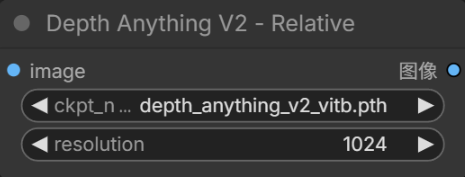

| depth_anything_v2_vitb | https://www.modelscope.cn/models/depth-anything/Depth-Anything-V2-Base |

| body pose | https://www.modelscope.cn/models/muse/annotator_body_pose_model |

| hand pose | https://www.modelscope.cn/models/muse/annotator_hand_pose_model |

| face pose (rename to facenet) | https://www.modelscope.cn/models/muse/annotator_face_pose_model |

| ControlNetHED | https://www.modelscope.cn/models/muse/ControlNetHED |
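If you prefer scripting the downloads over clicking through each page, the first step is turning the page links into model IDs. As far as I know, ModelScope's download tooling addresses models by an `owner/name` ID, but treat that as an assumption and check the ModelScope documentation before relying on it. The sketch below only parses the URLs from the checklist; the helper name `modelscope_model_id` is made up, and it performs no download.

```python
from urllib.parse import urlparse

# Page links copied from the checklist above.
MODEL_PAGES = {
    "depth_anything_v2_vitb": "https://www.modelscope.cn/models/depth-anything/Depth-Anything-V2-Base",
    "body pose": "https://www.modelscope.cn/models/muse/annotator_body_pose_model",
    "hand pose": "https://www.modelscope.cn/models/muse/annotator_hand_pose_model",
    "face pose": "https://www.modelscope.cn/models/muse/annotator_face_pose_model",
    "ControlNetHED": "https://www.modelscope.cn/models/muse/ControlNetHED",
}


def modelscope_model_id(url):
    """Extract 'owner/name' from a .../models/<owner>/<name> page URL."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    if len(parts) < 3 or parts[0] != "models":
        raise ValueError(f"not a ModelScope model page: {url}")
    return f"{parts[1]}/{parts[2]}"


MODEL_IDS = {name: modelscope_model_id(url) for name, url in MODEL_PAGES.items()}

for name, model_id in MODEL_IDS.items():
    print(f"{name}: {model_id}")
```

Whichever way you fetch the files, they still need to end up under ComfyUI/custom_nodes/comfyui_controlnet_aux/ckpts, and the face pose model still needs renaming as noted in the table.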

Workflow & Results

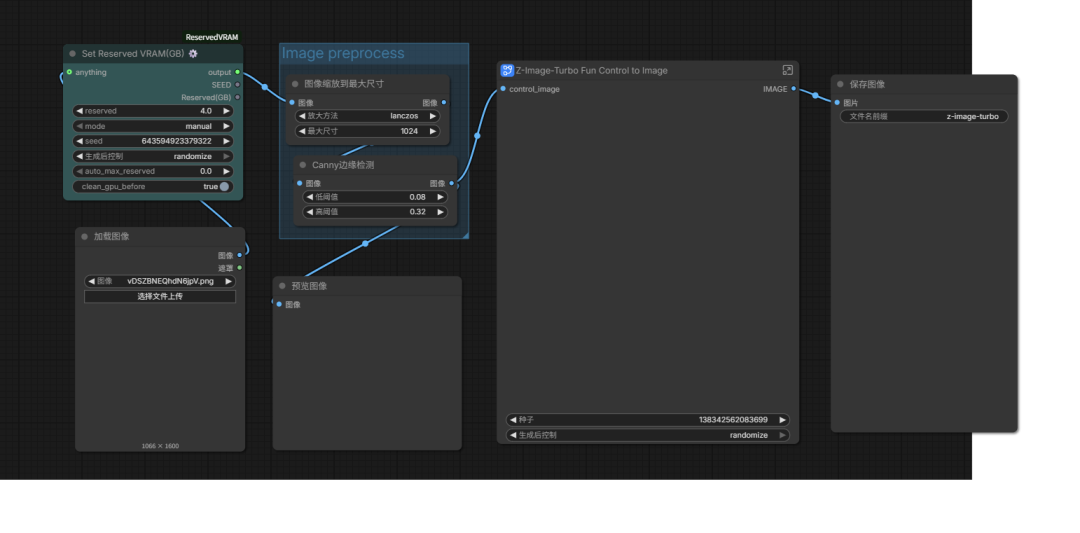

Now let's see the results. The workflow can be built from ComfyUI's official templates. However, on my 12GB entry-level GPU, loading the model and the patch at the same time causes OOM errors. Following a guide on running LTX2.3 on 12GB cards, I added a VRAM control plugin (Set Reserved VRAM(GB) ⚙️) to make it work, though generation is a bit slower (about 3 minutes per image on average):

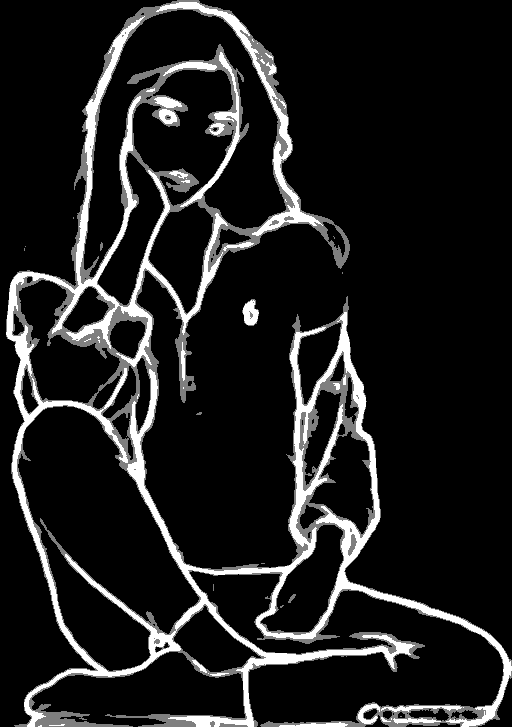

Canny edge detection is natively supported by ComfyUI — let's try it directly.

Next, try HED by replacing the Canny node with HED Soft-Edge Lines:

Depth Anything offers several model variants; I chose the base version:

The depth results look noisy. The control image seems to carry too much information, which gives the generated images an oil-painting-like appearance.

OpenPose requires three detection models for face, hands, and body:

The stick-figure approach sometimes doesn't perfectly respect the original pose — the generated poses can shift slightly.

Summary

- Use Z-Image-Turbo-Fun-Controlnet-Union as a model patch for Z-Image to enable ControlNet

- Use the Set Reserved VRAM(GB) ⚙️ node to manage VRAM usage, enabling low-end GPU operation

- Use the comfyui_controlnet_aux plugin to access the other ControlNet preprocessors (HED, depth, OpenPose) and their detection models

I found that Canny produces the highest-fidelity ControlNet inputs. There are still many pose-generation nodes I haven't tried, mostly because I couldn't find quick download sources.

Different nodes perform differently for real photos vs. cartoon characters, and different poses yield different results. Try them out and share your favorite pose-control model!