Z-Image vs ERNIE-Image: An In-Depth Comparison of Two Major Open-Source Models

Abstract: In 2025 the open-source AI image generation landscape gained two heavyweight contenders: Z-Image from Alibaba's Tongyi Lab and ERNIE-Image from Baidu. Both are built on the DiT (Diffusion Transformer) architecture, and each follows its own design path. This article compares them across six dimensions (architecture, quality, speed, prompt following, fine-tuning capability, and use-case fit) to help readers choose based on their actual needs.

I. Introduction: Two Titans of Open-Source Image Generation

As proprietary models dominate the commercial ecosystem, the open-source community urgently needs truly viable, high-performance alternatives. In 2025, two leading Chinese AI companies independently released their large-parameter open-source image generation models:

| | Z-Image | ERNIE-Image |
|---|---|---|
| Developer | Alibaba (Tongyi Lab) | Baidu |
| Parameter Scale | 6B | ~6B (DiT backbone) |
| License | Apache 2.0 | Open-source |
| Core Architecture | S3-DiT (single-stream) | DiT + Prompt Enhancer (single-stream) |

Both chose the single-stream DiT route, abandoning the high VRAM overhead of earlier dual-stream designs — a critical step toward practical deployability. However, their technical choices and optimization directions differ, giving each model a distinctly different character.

II. Architecture Comparison: S3-DiT vs DiT + Prompt Enhancer

2.1 Z-Image: S3-DiT Single-Stream Architecture

Z-Image adopts its own S3-DiT (Scalable Single-Stream DiT) architecture, whose core design philosophy is "preserving high-quality feature representation within a single-stream framework." Key features include:

- Single-stream design: Processes text and image tokens as one unified sequence through shared Transformer blocks instead of maintaining separate text and image streams, which significantly reduces VRAM consumption and inference latency compared to dual-stream architectures.

- Scalable structure: S3-DiT introduces an adaptive gating mechanism at the Transformer Block level, enabling more effective allocation of its 6B parameters across critical feature channels.

- Turbo distilled version: The 50-step Base model was further distilled into an 8-step Turbo variant that is much faster while largely preserving quality.

2.2 ERNIE-Image: DiT + Prompt Enhancer Dual-Engine

The architectural highlight of ERNIE-Image is its Prompt Enhancer module, a "model-external enhancement" stage that sits outside the image model itself:

- Single-stream DiT backbone: Responsible for converting enhanced prompts into high-quality images, structurally similar to mainstream single-stream DiT models.

- Prompt Enhancer: Performs semantic expansion and structured enhancement on the user's raw prompt input before inference, improving the model's comprehension of complex instructions.

- Native ComfyUI support: The Prompt Enhancer is integrated as a standalone node in ComfyUI, allowing users to toggle it on or off flexibly within their workflows.

2.3 Differences in Architectural Philosophy

| Dimension | Z-Image | ERNIE-Image |
|---|---|---|
| Optimization Direction | Internal model efficiency (better images in fewer steps) | Input-side enhancement (better prompt understanding) |
| Inference Pipeline | End-to-end, single stage | Prompt Enhancement → Image Generation (two stages) |
| Flexibility | Used as-is (model as tool) | Generation tunable via Enhancer parameters |
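The two pipeline shapes in the table above can be sketched as a single function with an optional pre-stage. This is a minimal illustration, not either project's actual API: `run_pipeline`, `toy_enhance`, and `toy_generate` are hypothetical names, and the two toy functions are string-based stand-ins for a real Prompt Enhancer and a real DiT sampler.

```python
from typing import Callable, List, Optional, Tuple


def run_pipeline(
    prompt: str,
    generate: Callable[[str], str],
    enhance: Optional[Callable[[str], str]] = None,
) -> Tuple[str, List[str]]:
    """Run generation, optionally preceded by a prompt-enhancement stage.

    Returns the generated result and the list of stages that executed.
    """
    stages: List[str] = []
    if enhance is not None:
        # Two-stage shape: rewrite the prompt before it reaches the image model.
        prompt = enhance(prompt)
        stages.append("enhance")
    image = generate(prompt)
    stages.append("generate")
    return image, stages


# Hypothetical stand-ins: a real enhancer would be a language model and a
# real generator would be a diffusion sampler returning pixels.
def toy_enhance(prompt: str) -> str:
    return prompt + ", detailed lighting, clear composition"


def toy_generate(prompt: str) -> str:
    return f"<image for: {prompt}>"


single_stage = run_pipeline("a red fox", toy_generate)
two_stage = run_pipeline("a red fox", toy_generate, enhance=toy_enhance)
print(single_stage[1])  # stages executed without an enhancer
print(two_stage[1])     # stages executed with an enhancer
```

Passing `enhance=None` mirrors switching the enhancer off in a workflow: the generator receives the raw prompt and only one stage runs.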

III. Quality Comparison: Realism, Text, and Style

3.1 Realism and Detail Fidelity

Community consensus: Z-Image performs better in photographic-level realism.

- Images generated by Z-Image look more natural in details such as skin texture, light-shadow transitions, and object surface textures. In portrait and product photography scenarios especially, the output is "production-ready" right out of the box.

- Z-Image's images maintain good clarity even after upscaling, with excellent noise control, making them suitable for workflows that require post-processing enlargement.

ERNIE-Image's realism is also strong, but it is slightly inferior to Z-Image in extreme detail rendering (e.g., hair strands, metallic reflections).

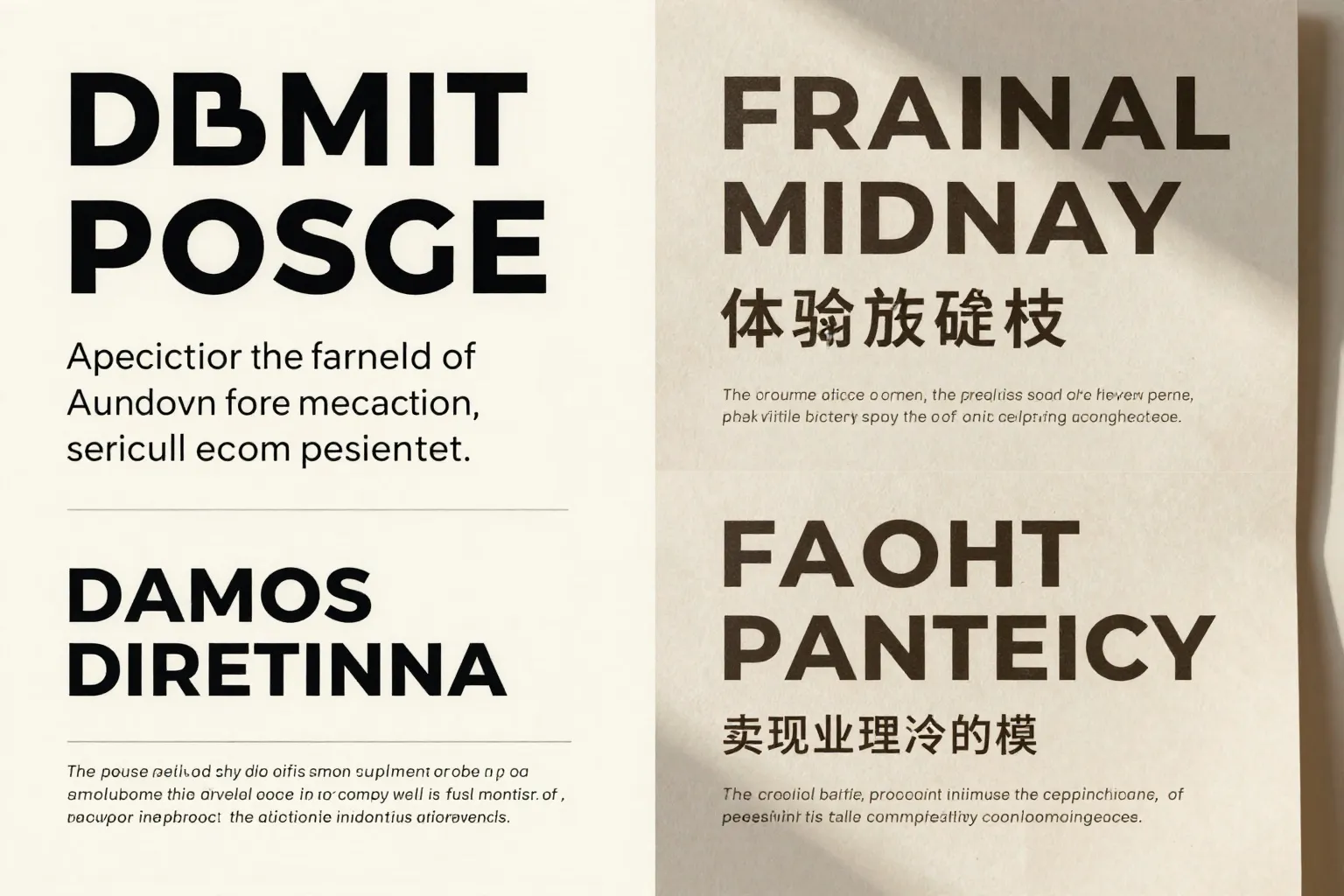

3.2 Text Rendering Capability

This is one of the most fiercely contested battlegrounds between the two models. The conclusion is not a simple "winner vs. loser"; rather, each model has its own strengths:

| Dimension | Z-Image | ERNIE-Image |
|---|---|---|
| Benchmark Accuracy | Slightly lower | Leads by ~4% |
| Bilingual (CN/EN) Support | ✅ Native support | ✅ Supported |
| Text Clarity | Cleaner, fewer artifacts | Occasional stroke bleeding |
| Text Fidelity After Upscaling | Better | Average |

Practical experience:

- In standardized benchmarks, ERNIE-Image's text rendering accuracy leads by a narrow margin of approximately 4%.

- However, community feedback indicates that text in Z-Image outputs appears visually cleaner, with sharper stroke edges, and remains legible even after upscaling.

- In short: ERNIE-Image renders text more accurately; Z-Image renders text more beautifully.

3.3 Style Fidelity

This is a core strength area for ERNIE-Image.

- ERNIE-Image performs more stably on "specified artistic style" tasks (such as ink wash painting, cyberpunk, or Studio Ghibli style), with higher consistency in output style.

- ERNIE-Image Turbo holds its style more consistently than Z-Image Turbo when the scheduler is changed, so users can switch sampling strategies without losing the target style.

- Z-Image's performance on style tasks is "adequate but not stunning" — strong in photorealistic rendering, relatively weaker in artistic styles.

IV. Speed and VRAM Comparison

4.1 Inference Speed

| Version | Generation Steps | Relative Speed | Notes |
|---|---|---|---|
| Z-Image Turbo | 8 steps | ⚡ Fastest | Extremely fast after distillation |
| ERNIE-Image Turbo | Fewer steps than Base | Fast | But affected by extra Prompt Enhancer overhead |
| Z-Image Base | 50 steps | Standard | Balanced quality and speed |
| ERNIE-Image Base | Standard steps | Standard | Base version |

Z-Image holds the advantage in pure inference speed, for two reasons:

- The 8-step Turbo distillation effect is significant, greatly compressing inference time.

- No additional Prompt Enhancer pre-step means lower end-to-end latency.
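Both reasons can be put into a rough latency model: a fixed pre-step cost plus a per-step cost multiplied by the number of denoising steps. The sketch below is a back-of-the-envelope estimate under stated assumptions. The 0.5 seconds per step and the 1.0 second pre-step cost are made-up illustrative values rather than measurements, the function names are hypothetical, and text encoding and VAE decoding are ignored.

```python
def estimated_latency(
    steps: int, seconds_per_step: float, pre_step_seconds: float = 0.0
) -> float:
    """Fixed pre-step cost (e.g. prompt enhancement) plus per-step denoising cost."""
    return pre_step_seconds + steps * seconds_per_step


def step_ratio(base_steps: int, turbo_steps: int) -> float:
    """How many times fewer denoising steps the distilled variant runs."""
    return base_steps / turbo_steps


# Step counts come from the table above; the timing values are assumptions.
SECONDS_PER_STEP = 0.5
base_latency = estimated_latency(50, SECONDS_PER_STEP)
turbo_latency = estimated_latency(8, SECONDS_PER_STEP)
turbo_with_pre_step = estimated_latency(8, SECONDS_PER_STEP, pre_step_seconds=1.0)

print(step_ratio(50, 8))    # 6.25
print(base_latency)         # 25.0
print(turbo_latency)        # 4.0
print(turbo_with_pre_step)  # 5.0
```

The shorter the denoising loop, the larger the share of total latency taken by any fixed pre-step, which is why an enhancer stage matters more for Turbo variants than for Base models.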

4.2 VRAM Usage

Both models are single-stream DiT models of roughly 6B parameters, so their VRAM footprints are similar and both can be deployed on consumer-grade GPUs:

- Z-Image: Loads no auxiliary enhancer module, so peak VRAM usage is slightly lower. Its lower step count reduces latency rather than memory.

- ERNIE-Image: Requires additionally loading the Prompt Enhancer module, resulting in slightly higher VRAM usage, but the difference is manageable.

Bottom line: On consumer-grade GPUs (16GB VRAM), both can run inference in FP16. Z-Image's 8-step Turbo mode offers better value.
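The 16GB figure can be sanity-checked with simple arithmetic on the weights alone. The sketch below assumes exactly 6 billion parameters and counts only the DiT backbone; the text encoder, VAE, activations, and any Prompt Enhancer are excluded, so real usage will be higher. `weight_memory_gib` is a hypothetical helper name.

```python
def weight_memory_gib(num_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the weights alone, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


NUM_PARAMS = 6e9  # assumption: exactly 6B parameters in the backbone

fp16_gib = weight_memory_gib(NUM_PARAMS, 2)  # FP16/BF16: 2 bytes per parameter
int8_gib = weight_memory_gib(NUM_PARAMS, 1)  # 8-bit quantization: 1 byte per parameter

print(round(fp16_gib, 2))  # 11.18
print(round(int8_gib, 2))  # 5.59
```

At FP16 the backbone weights take about 11.2 GiB, which leaves only a few GiB on a 16GB card for everything else. That is why any additional module loaded alongside the backbone is worth accounting for.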

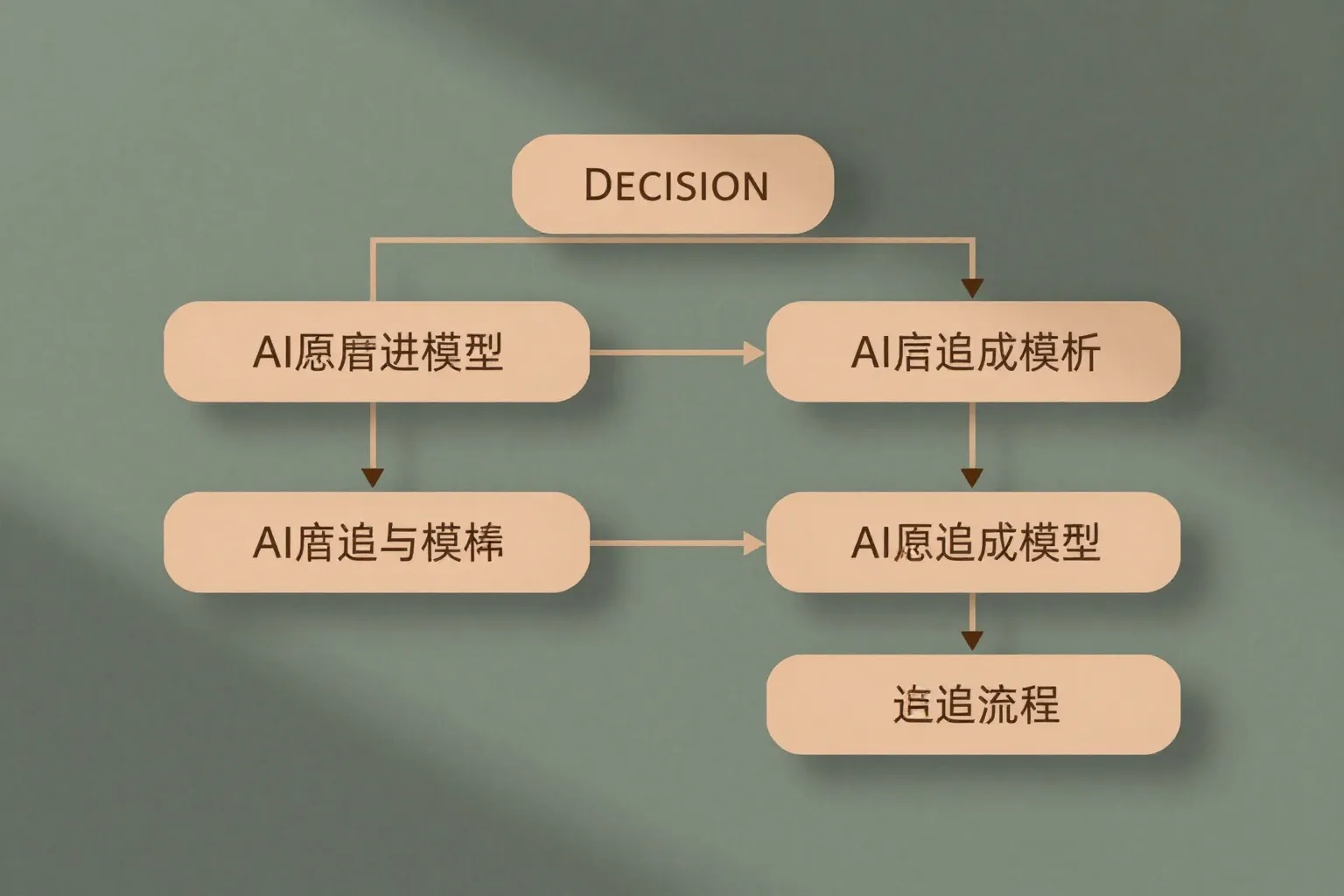

V. Prompt Following and Fine-Tuning Capability

5.1 Prompt Following

ERNIE-Image wins.

- ERNIE-Image's Prompt Enhancer acts as a "translator and expander" of user intent: it converts short prompts into structured descriptions that the model can parse more easily. As a result, ERNIE-Image follows complex instructions (multiple subjects, multiple relationships, spatial constraints) more reliably.

- Community benchmarks show that in scenarios where prompts contain three or more elements, ERNIE-Image's instruction-following rate is noticeably higher than Z-Image's.

- ERNIE-Image Turbo maintains strong prompt following across multiple schedulers, which gives users more freedom in choosing a sampling strategy.

Z-Image's prompt following is also first-tier, but in complex multi-element scenarios it occasionally misses elements or weights them unevenly.
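The community benchmarks cited here do not come with a published metric definition, so the following is only one plausible way to compute an instruction-following rate. It assumes a prompt counts as "followed" when every required element is detected in the output, and it restricts the score to prompts with three or more elements. The element sets are toy data, and `following_rate` is a hypothetical helper.

```python
from typing import Iterable, Set, Tuple


def following_rate(
    samples: Iterable[Tuple[Set[str], Set[str]]], min_elements: int = 3
) -> float:
    """Fraction of multi-element prompts whose required elements all appear.

    Each sample is (required_elements, detected_elements). Prompts with
    fewer than `min_elements` required elements are ignored.
    """
    relevant = [(req, det) for req, det in samples if len(req) >= min_elements]
    if not relevant:
        raise ValueError("no prompts with enough required elements")
    hits = sum(1 for req, det in relevant if req <= det)  # subset test
    return hits / len(relevant)


# Toy data: in practice the detected sets would come from human raters
# or an automated detector.
samples = [
    ({"cat", "hat", "table"}, {"cat", "hat", "table", "window"}),  # all present
    ({"dog", "ball", "tree"}, {"dog", "tree"}),                    # "ball" missing
    ({"car", "road"}, {"car"}),                                    # only 2 elements: ignored
]
rate = following_rate(samples)
print(rate)  # 0.5
```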

5.2 LoRA Fine-Tuning Support

| Dimension | Z-Image | ERNIE-Image |
|---|---|---|
| LoRA Fine-Tuning Support | ✅ Native support | ⚠️ Limited support |
| Community Ecosystem | Active, rich LoRA resources | Relatively scarce |
| Fine-Tuning Difficulty | Low, mature workflow | Requires self-adaptation |

Z-Image is clearly ahead in customizability.

Z-Image supports a standard LoRA fine-tuning workflow. The community has accumulated a large number of style and character LoRA models, allowing users to quickly customize their own styles. ERNIE-Image's LoRA ecosystem is still in its early stages.
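Part of why LoRA workflows are cheap to run and share is the parameter count. LoRA freezes a weight matrix of shape d_out × d_in and trains two low-rank factors of rank r, adding r × (d_in + d_out) parameters. The sketch below works through that arithmetic; the 4096-wide layer and rank 16 are illustrative assumptions, not the actual dimensions of either model.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters in a dense weight matrix of shape (d_out, d_in)."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two LoRA factors: A is (rank, d_in), B is (d_out, rank)."""
    return rank * (d_in + d_out)


# Illustrative dimensions only (assumed, not taken from Z-Image or ERNIE-Image).
D_IN, D_OUT, RANK = 4096, 4096, 16

dense = full_params(D_IN, D_OUT)
adapter = lora_params(D_IN, D_OUT, RANK)
fraction = adapter / dense

print(dense)     # 16777216
print(adapter)   # 131072
print(fraction)  # 0.0078125
```

Under these assumptions the adapter trains under 1% of the parameters of the layer it modifies, which keeps LoRA files small and makes them easy to distribute.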

VI. Use-Case Recommendations

Based on the above comparison, here are scenario-based model selection recommendations:

Choose Z-Image if your needs are:

- Photographic-quality realism: Product photography, portrait photography, e-commerce hero images, and other scenarios requiring a high degree of realism.

- Speed-first: Applications requiring batch image generation or sensitive to inference latency (e.g., real-time generation, online APIs).

- Text poster design: Generating images containing Chinese and English text, with text that remains sharp and clean.

- Custom styles/characters: Relying on LoRA fine-tuning to adapt to brand visuals or specific IP characters.

- VRAM-constrained environments: Running at lower cost on consumer-grade GPUs.

Choose ERNIE-Image if your needs are:

- Strong style control: Needing stable reproduction of specific artistic styles (ink wash, oil painting, cyberpunk, etc.).

- Complex prompts: Prompts containing multiple subjects, actions, and spatial relationships that require precise model comprehension.

- Text benchmark accuracy: Pursuing the highest text rendering accuracy in standardized evaluations.

- Workflow integration: Already deeply invested in the ComfyUI ecosystem and wanting to leverage the Prompt Enhancer node.

- Prompt exploration: Wanting to leverage the Enhancer to start from simple inputs and let the model "write good prompts for you."

VII. Conclusion: Each Has Its Strengths, Choose by Need

| Dimension | Z-Image Advantage | ERNIE-Image Advantage |
|---|---|---|
| Architecture | S3-DiT, end-to-end efficient | DiT + Enhancer, input enhancement |
| Realism | ⭐⭐⭐⭐⭐ Superior | ⭐⭐⭐⭐ |
| Text Rendering | Cleaner, sharper after upscaling | Benchmark accuracy ~4% higher |
| Style Fidelity | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ Superior |
| Speed | ⭐⭐⭐⭐⭐ 8-step Turbo | ⭐⭐⭐⭐ |
| VRAM | ⭐⭐⭐⭐⭐ Lower | ⭐⭐⭐⭐ |
| Prompt Following | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ Stronger |
| LoRA Fine-Tuning | ⭐⭐⭐⭐⭐ Mature ecosystem | ⭐⭐⭐ |
| License | Apache 2.0 (permissive) | Open-source |

One-Sentence Summary

Z-Image represents "efficiency and realism": it is faster, leaner, and more photorealistic, and its LoRA ecosystem makes it highly customizable.

ERNIE-Image represents "understanding and control": it offers stronger prompt comprehension, better style stability, and higher text benchmark accuracy.

The two are not substitutes but complements. In real-world production, teams can absolutely deploy both models simultaneously, intelligently routing tasks based on specific requirements to achieve the optimal combination of quality and efficiency. The prosperity of the open-source ecosystem is precisely reflected in this "blooming diversity" of competition.
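The routing idea can be sketched as a small rule table derived from the recommendations in Section VI. This is a minimal illustration under stated assumptions: the need tags, the tie-breaking default, and the `route` function are all hypothetical, and a production router would likely also weigh cost, queue depth, and measured quality.

```python
from typing import Set

Z_IMAGE = "z-image"
ERNIE_IMAGE = "ernie-image"

# Hypothetical need tags, mapped from the Section VI recommendations.
Z_IMAGE_NEEDS = {"photorealism", "low_latency", "clean_text", "lora", "low_vram"}
ERNIE_NEEDS = {"art_style", "complex_prompt", "text_accuracy", "prompt_expansion"}


def route(needs: Set[str], default: str = Z_IMAGE) -> str:
    """Pick the model whose strengths match more of the task's needs."""
    z_score = len(needs & Z_IMAGE_NEEDS)
    e_score = len(needs & ERNIE_NEEDS)
    if z_score == e_score:
        return default  # tie or no recognized needs: fall back to the default
    return Z_IMAGE if z_score > e_score else ERNIE_IMAGE


print(route({"photorealism", "low_latency"}))  # z-image
print(route({"art_style", "complex_prompt"}))  # ernie-image
print(route({"photorealism", "art_style"}))    # tie, so the default: z-image
```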

This article is based on publicly available information and community benchmarks from 2025. Model capabilities continue to evolve; please refer to the latest official releases for the most up-to-date information.