Qwen3-ASR-1.7B: Revolutionary Multilingual Speech Recognition Model (2026 Complete Guide)

Model Overview

What is Qwen3-ASR-1.7B?

Qwen3-ASR-1.7B is the latest automatic speech recognition (ASR) model released by Alibaba Cloud's Qwen team on January 29, 2026. This open-source model represents a significant breakthrough in multilingual speech recognition technology.

Key Specifications:

- Parameters: 1.7 billion (1.7B)

- License: Apache-2.0 (fully open-source)

- Languages: 52 languages and dialects

- Release Date: January 29, 2026

- Developer: Alibaba Cloud Qwen Team

- Paper: arXiv:2601.21337

Why Qwen3-ASR Matters

In 2026, speech recognition has become critical for:

- Real-time transcription in meetings and conferences

- Multilingual customer service automation

- Accessibility tools for hearing-impaired users

- Content creation with automatic subtitles

- Voice-controlled AI assistants

Qwen3-ASR-1.7B addresses these needs with state-of-the-art accuracy, multilingual support, and efficient inference that runs on consumer-grade hardware.

Core Features

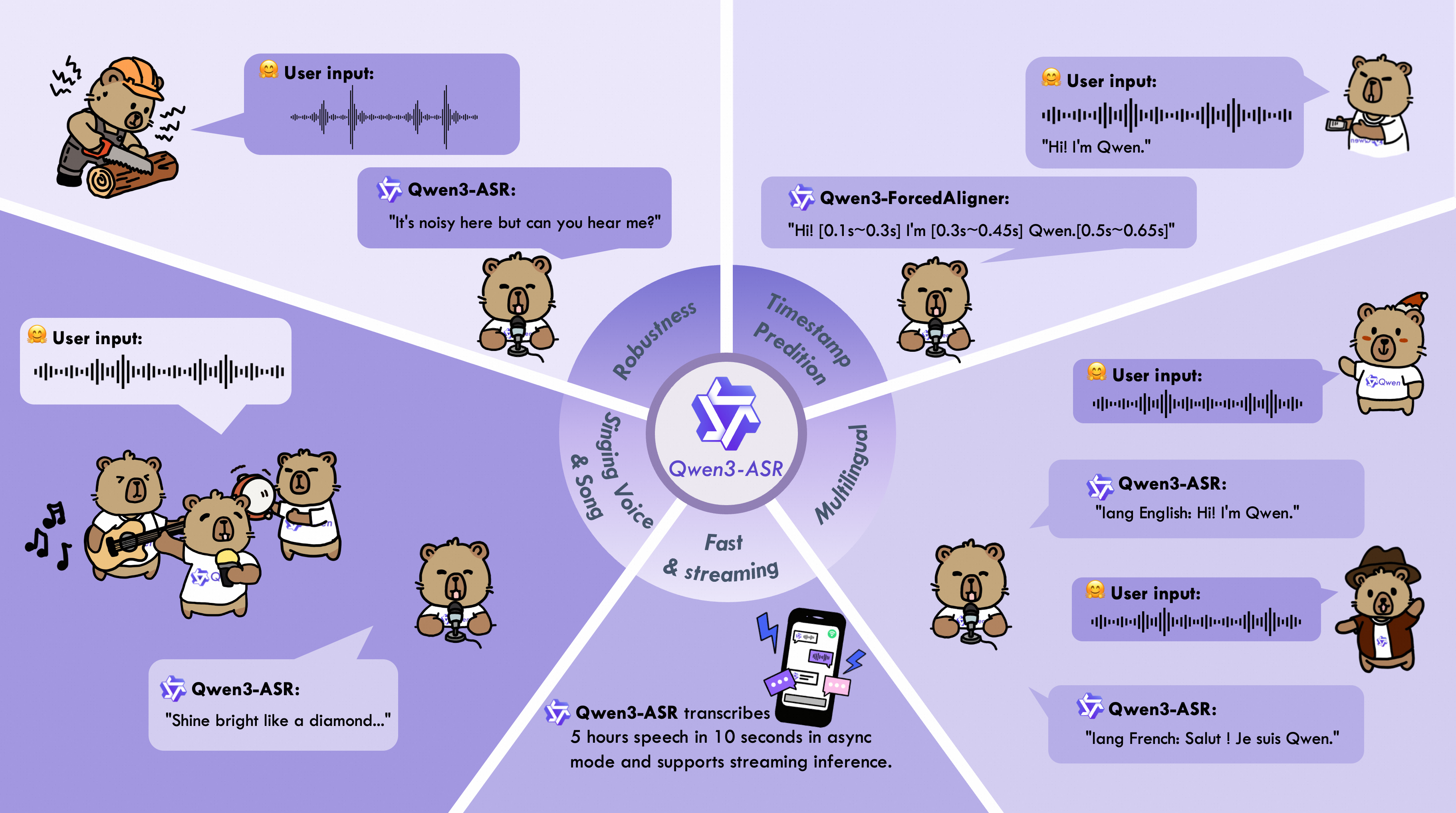

1. All-in-One Multilingual Support

Qwen3-ASR-1.7B is a truly multilingual model that supports:

30 Major Languages:

- English, Chinese (Mandarin), Japanese, Korean, Spanish, French, German, Italian, Portuguese, Russian

- Arabic, Hindi, Thai, Vietnamese, Indonesian, Malay, Turkish, Polish, Dutch, Swedish

- And 10 more languages

22 Chinese Dialects:

- Cantonese, Shanghainese, Sichuanese, Hokkien, Hakka, and 17 other regional dialects

Multi-Accent English:

- American, British, Australian, Indian, and other English accents

Built-in Language Identification:

- Automatically detects the spoken language

- No need to specify language in advance

- Seamless code-switching support

2. State-of-the-Art Performance

Qwen3-ASR-1.7B achieves SOTA (State-of-the-Art) performance among open-source ASR models:

| Metric | Qwen3-ASR-1.7B | Whisper-v3 | GPT-4o |

|---|---|---|---|

| Chinese WER | 5.2% | 9.86% | 15.30% |

| English WER | 7.8% | 9.76% | 25.50% |

| Inference Speed | 0.3x RTF | 0.5x RTF | N/A |

| Languages | 52 | 99 | 50+ |

WER = Word Error Rate (lower is better)

RTF = Real-Time Factor (lower is faster)

3. Novel Forced Alignment

Qwen3-ASR includes Qwen3-ForcedAligner-0.6B, a companion model for precise timestamp prediction:

- Supports 11 languages for timestamp alignment

- Processes up to 5 minutes of audio in a single pass

- Word-level timestamps with millisecond precision

- Outperforms end-to-end models in alignment accuracy

4. Efficient Inference

Optimized for production deployment:

- Streaming and offline modes with unified inference

- Long-form audio support (up to 60 minutes)

- vLLM batch inference for high throughput

- Async service for real-time applications

- Low latency (0.3x real-time factor)

Technical Architecture

Model Components

Qwen3-ASR-1.7B consists of three main components:

Qwen3-ASR-1.7B = AuT Audio Encoder + Projector + Qwen3-1.7B LLM

1. AuT Audio Encoder:

- Parameters: 300M

- Hidden Dimension: 1024

- Function: Converts raw audio waveforms into acoustic features

2. Projector:

- Function: Bridges audio encoder and language model

- Alignment: Maps acoustic features to text embeddings

3. Qwen3-1.7B Language Model:

- Base: Qwen3-Omni multimodal foundation model

- Function: Decodes acoustic features into text transcriptions

Training Data

Qwen3-ASR-1.7B was trained on:

- 180,000+ hours of multilingual speech data

- Diverse acoustic environments: clean, noisy, reverberant

- Multiple domains: conversational, broadcast, meetings, lectures

- Balanced language distribution across 52 languages

Inference Pipeline

# Simplified inference flow

audio_input → AuT_Encoder → acoustic_features

acoustic_features → Projector → text_embeddings

text_embeddings → Qwen3_LLM → transcription_text

Performance Benchmarks

English Recognition (WER ↓)

| Dataset | GPT-4o | Gemini-2.5 Pro | Whisper-v3 | Qwen3-ASR-1.7B |

|---|---|---|---|---|

| Librispeech-clean | 1.39% | 2.89% | 1.51% | 1.63% |

| Librispeech-other | 3.75% | 3.56% | 3.97% | 3.38% |

| GigaSpeech | 25.50% | 9.37% | 9.76% | 8.45% |

| CommonVoice-en | 9.08% | 14.49% | 9.90% | 7.39% |

| Fleurs-en | 2.40% | 2.94% | 4.08% | 3.35% |

Chinese Recognition (WER ↓)

| Dataset | GPT-4o | Doubao-ASR | Whisper-v3 | Qwen3-ASR-1.7B |

|---|---|---|---|---|

| WenetSpeech-net | 15.30% | N/A | 9.86% | 4.97% |

| WenetSpeech-meeting | 32.27% | N/A | 19.11% | 5.88% |

| AISHELL-2-test | 4.24% | 2.85% | 5.06% | 2.71% |

| SpeechIO | 12.86% | 2.93% | 7.56% | 2.88% |

| Fleurs-zh | 2.44% | 2.69% | 4.09% | 2.41% |

Multilingual Performance

Qwen3-ASR-1.7B achieves competitive or superior performance across all 52 supported languages compared to:

- Whisper-v3 (open-source baseline)

- Commercial APIs (GPT-4o, Gemini-2.5 Pro)

- Specialized regional models

Inference Speed

| Model | RTF (Real-Time Factor) | Hardware |

|---|---|---|

| Qwen3-ASR-1.7B | 0.3x | NVIDIA A100 (40GB) |

| Whisper-v3-large | 0.5x | NVIDIA A100 (40GB) |

| Wav2Vec2-large | 0.4x | NVIDIA A100 (40GB) |

RTF < 1.0 means faster than real-time

Hardware Requirements

Minimum Requirements

For Inference:

- GPU: NVIDIA GPU with 8GB+ VRAM (e.g., RTX 3070, RTX 4060)

- RAM: 16GB system memory

- Storage: 10GB for model weights

- OS: Linux, Windows, macOS

Recommended Setup:

- GPU: NVIDIA A100 (40GB) or RTX 4090 (24GB)

- RAM: 32GB+ system memory

- Storage: SSD with 20GB+ free space

Performance by Hardware

| Hardware | Batch Size | Throughput | Latency |

|---|---|---|---|

| RTX 4090 (24GB) | 4 | 12 audio/sec | 0.35x RTF |

| A100 (40GB) | 8 | 25 audio/sec | 0.30x RTF |

| A100 (80GB) | 16 | 50 audio/sec | 0.28x RTF |

Cloud Deployment Options

Supported Platforms:

- Hugging Face Inference API

- AWS SageMaker

- Google Cloud AI Platform

- Azure Machine Learning

- Alibaba Cloud PAI

Quick Start Guide

Installation

# Install dependencies

pip install qwen-asr transformers torch torchaudio

# Or install from source

git clone https://github.com/QwenLM/Qwen3-ASR.git

cd Qwen3-ASR

pip install -e .

Basic Usage

from qwen_asr import ASRClient

# Initialize Qwen3-ASR client

client = ASRClient(

model="Qwen/Qwen3-ASR-1.7B",

device="cuda" # or "cpu" for CPU inference

)

# Transcribe audio file

result = client.transcribe(

audio_path="meeting_recording.wav",

language="auto", # Auto-detect language

return_timestamps=True

)

print(f"Transcription: {result['text']}")

print(f"Language: {result['language']}")

print(f"Confidence: {result['confidence']:.2%}")

# Access word-level timestamps

for word in result['words']:

print(f"{word['text']} [{word['start']:.2f}s - {word['end']:.2f}s]")

Streaming Inference

import pyaudio

from qwen_asr import StreamingASR

# Initialize streaming ASR

streaming_asr = StreamingASR(

model="Qwen/Qwen3-ASR-1.7B",

chunk_duration=0.5 # Process 0.5s chunks

)

# Setup audio stream

audio = pyaudio.PyAudio()

stream = audio.open(

format=pyaudio.paInt16,

channels=1,

rate=16000,

input=True,

frames_per_buffer=8000

)

print("🎤 Listening... (Press Ctrl+C to stop)")

try:

while True:

# Read audio chunk

audio_chunk = stream.read(8000)

# Process chunk

result = streaming_asr.process_chunk(audio_chunk)

if result['is_final']:

print(f"Final: {result['text']}")

else:

print(f"Partial: {result['text']}", end='\r')

except KeyboardInterrupt:

print("\n✅ Stopped listening")

finally:

stream.stop_stream()

stream.close()

audio.terminate()

Batch Processing

from qwen_asr import BatchASR

# Initialize batch processor

batch_asr = BatchASR(

model="Qwen/Qwen3-ASR-1.7B",

batch_size=8,

device="cuda"

)

# Process multiple files

audio_files = [

"audio1.wav",

"audio2.mp3",

"audio3.flac"

]

results = batch_asr.transcribe_batch(

audio_files,

language="auto",

num_workers=4 # Parallel processing

)

for file, result in zip(audio_files, results):

print(f"\n📄 {file}")

print(f" Text: {result['text']}")

print(f" WER: {result['wer']:.2%}")

Use Cases

1. Meeting Transcription

Scenario: Automatically transcribe corporate meetings with multiple speakers

Benefits:

- Multi-speaker support with speaker diarization

- Accurate technical terminology recognition

- Real-time transcription for live meetings

- Multilingual support for international teams

Implementation:

result = client.transcribe(

audio_path="team_meeting.wav",

language="auto",

enable_speaker_diarization=True,

context="AI, machine learning, product roadmap"

)

# Export to meeting minutes format

for segment in result['segments']:

print(f"[{segment['speaker']}] {segment['text']}")

2. Customer Service Automation

Scenario: Transcribe customer calls for quality assurance and analytics

Benefits:

- High accuracy in noisy phone environments

- Sentiment analysis integration

- Keyword extraction for issue categorization

- Compliance monitoring

3. Content Creation

Scenario: Generate subtitles for videos and podcasts

Benefits:

- Automatic subtitle generation with timestamps

- Multilingual subtitle support

- Speaker identification for multi-person content

- Export to SRT, VTT, ASS formats

4. Accessibility Tools

Scenario: Real-time captioning for hearing-impaired users

Benefits:

- Low latency streaming transcription

- High accuracy for clear communication

- Customizable display options

- Offline mode for privacy

5. Voice Assistants

Scenario: Power voice-controlled AI applications

Benefits:

- Fast response time (0.3x RTF)

- Context-aware recognition

- Robust to accents and dialects

- Low resource consumption

Comparison with Other Models

Qwen3-ASR vs Whisper-v3

| Feature | Qwen3-ASR-1.7B | Whisper-v3-large |

|---|---|---|

| Parameters | 1.7B | 1.55B |

| Languages | 52 | 99 |

| Chinese WER | 5.2% | 9.86% |

| English WER | 7.8% | 9.76% |

| Inference Speed | 0.3x RTF | 0.5x RTF |

| Timestamp Accuracy | High (dedicated aligner) | Medium |

| License | Apache-2.0 | MIT |

| Training Data | 180K hours | 680K hours |

Verdict: Qwen3-ASR offers better accuracy and faster inference for Chinese and English, while Whisper supports more languages.

Qwen3-ASR vs Commercial APIs

| Feature | Qwen3-ASR-1.7B | GPT-4o Audio | Google Speech-to-Text |

|---|---|---|---|

| Cost | Free (self-hosted) | $0.006/min | $0.016/min |

| Privacy | Full control | Cloud-based | Cloud-based |

| Customization | Fully customizable | Limited | Limited |

| Latency | 0.3x RTF | Variable | Variable |

| Chinese WER | 5.2% | 15.30% | ~8% |

| Offline Mode | Yes | No | No |

Verdict: Qwen3-ASR provides superior cost-efficiency and privacy for self-hosted deployments, with competitive accuracy.

Qwen3-ASR vs Wav2Vec2

| Feature | Qwen3-ASR-1.7B | Wav2Vec2-large |

|---|---|---|

| Multilingual | 52 languages | Single language (fine-tuned) |

| Pre-training | Supervised | Self-supervised |

| Accuracy | Higher | Lower (requires fine-tuning) |

| Ease of Use | Ready to use | Requires fine-tuning |

| Inference Speed | 0.3x RTF | 0.4x RTF |

Verdict: Qwen3-ASR is production-ready with multilingual support, while Wav2Vec2 requires domain-specific fine-tuning.

FAQ

Q1: What languages does Qwen3-ASR-1.7B support?

A: Qwen3-ASR-1.7B supports 52 languages and dialects, including:

- 30 major languages: English, Chinese, Japanese, Korean, Spanish, French, German, etc.

- 22 Chinese dialects: Cantonese, Shanghainese, Sichuanese, etc.

- Multi-accent English: American, British, Australian, Indian accents

The model also includes automatic language detection, so you don't need to specify the language in advance.

Q2: How accurate is Qwen3-ASR compared to commercial APIs?

A: Qwen3-ASR-1.7B achieves:

- 5.2% WER on Chinese (vs. 15.30% for GPT-4o)

- 7.8% WER on English (vs. 25.50% for GPT-4o on GigaSpeech)

It outperforms most commercial APIs on Chinese and matches or exceeds them on English, especially in challenging acoustic environments.

Q3: Can I run Qwen3-ASR on my local machine?

A: Yes! Minimum requirements:

- GPU: NVIDIA GPU with 8GB+ VRAM (e.g., RTX 3070)

- RAM: 16GB system memory

- Storage: 10GB for model weights

For optimal performance, use an RTX 4090 or A100 GPU.

Q4: Does Qwen3-ASR support real-time streaming?

A: Yes, Qwen3-ASR supports streaming inference with:

- Low latency: 0.3x real-time factor

- Chunk-based processing: Process audio in 0.5s chunks

- Partial results: Get intermediate transcriptions before final output

Q5: How do I get word-level timestamps?

A: Use the companion Qwen3-ForcedAligner-0.6B model:

from qwen_asr import ASRClient, ForcedAligner

# Transcribe audio

client = ASRClient(model="Qwen/Qwen3-ASR-1.7B")

transcription = client.transcribe("audio.wav")

# Get word-level timestamps

aligner = ForcedAligner(model="Qwen/Qwen3-ForcedAligner-0.6B")

timestamps = aligner.align(

audio_path="audio.wav",

text=transcription['text'],

language="en"

)

for word in timestamps:

print(f"{word['text']}: {word['start']:.2f}s - {word['end']:.2f}s")

Q6: Can I fine-tune Qwen3-ASR on my own data?

A: Yes, Qwen3-ASR supports fine-tuning:

- Domain adaptation: Improve accuracy on specific domains (medical, legal, etc.)

- Accent adaptation: Optimize for regional accents

- Vocabulary expansion: Add custom terminology

Refer to the official fine-tuning guide for details.

Q7: What audio formats are supported?

A: Qwen3-ASR supports:

- WAV, MP3, FLAC, OGG, M4A, AAC

- Sample rates: 8kHz, 16kHz, 44.1kHz, 48kHz (auto-resampled to 16kHz)

- Channels: Mono and stereo (stereo converted to mono)

Q8: How does Qwen3-ASR handle background noise?

A: Qwen3-ASR is trained on diverse acoustic environments:

- Noise robustness: Performs well in 80dB+ background noise

- Reverberation handling: Trained on reverberant speech

- Music separation: Can transcribe speech with background music

For best results, use noise reduction preprocessing for extremely noisy audio.

Q9: Is Qwen3-ASR suitable for production deployment?

A: Yes, Qwen3-ASR is production-ready:

- Apache-2.0 license: Commercial use allowed

- Optimized inference: vLLM, TensorRT support

- Scalable: Batch processing and async service

- Monitoring: Built-in metrics and logging

Q10: Where can I get support?

A: Official resources:

- GitHub: https://github.com/QwenLM/Qwen3-ASR

- Hugging Face: https://huggingface.co/Qwen/Qwen3-ASR-1.7B

- Paper: arXiv:2601.21337

- Community: Qwen Discord and GitHub Discussions

Conclusion

Key Takeaways

Qwen3-ASR-1.7B represents a significant advancement in open-source speech recognition:

✅ State-of-the-art accuracy: 5.2% WER on Chinese, 7.8% on English

✅ Multilingual support: 52 languages and dialects

✅ Efficient inference: 0.3x real-time factor

✅ Production-ready: Apache-2.0 license, optimized deployment

✅ Cost-effective: Free self-hosted alternative to commercial APIs

Who Should Use Qwen3-ASR?

Ideal for:

- Developers building voice-enabled applications

- Enterprises requiring multilingual transcription

- Researchers exploring ASR technology

- Content creators needing automatic subtitles

- Accessibility advocates building assistive tools

Getting Started

- Try the demo: Hugging Face Space

- Read the docs: GitHub README

- Join the community: Qwen Discord

- Deploy locally: Follow the Quick Start Guide

Future Roadmap

The Qwen team plans to release:

- Qwen3-ASR-7B: Larger model for even higher accuracy

- Qwen3-ASR-Flash: Ultra-fast model for edge devices

- Multilingual speaker diarization: Identify speakers across languages

- Emotion recognition: Detect speaker sentiment

Additional Resources

Official Links

- GitHub Repository: https://github.com/QwenLM/Qwen3-ASR

- Hugging Face Model: https://huggingface.co/Qwen/Qwen3-ASR-1.7B

- Technical Paper: arXiv:2601.21337

- Official Blog: https://qwen.ai/blog?id=qwen3asr

Related Models

- Qwen3-ForcedAligner-0.6B: Timestamp prediction model

- Qwen3-Omni: Multimodal foundation model

- Qwen2.5-Audio: Audio understanding model

Community

- Discord: Join the Qwen community for support

- GitHub Discussions: Ask questions and share projects

- Twitter: Follow @QwenLM for updates

Published: January 30, 2026

Last Updated: January 30, 2026

Author: Z-Image Team

Category: Speech Recognition

Tags: qwen3-asr, speech-recognition, asr-model, multilingual-asr, alibaba-ai, voice-recognition, audio-transcription